Rephora

Rephora is a redesign of the perfume shopping experience on Sephora's website. Our research question was: How can we enhance the online purchasing process to enable customers to make in-store decisions virtually? Given the sensory nature of fragrance, we evaluated several ideas to create a more robust and informative online process for conveying information over a virtual medium.

Methods

Observations, Semi-structured Interviews, Survey, Contextual Interviews, Usability Testing, Task-based Analysis, and Cognitive Walkthrough

Responsibilities (UX Researcher)

Qualitative Research & Data Analysis, Wireframing, Usability Testing

1. Understanding the Problem

Background - Market Research & Literature Review

While ecommerce has increased the convenience of shopping in many industries, it poses a new challenge for cosmetic and perfume stores: how can the modality of smelling a perfume be digitized? Sephora, specifically, has worked to differentiate itself from competitors by introducing technology into its business solutions. For example, Sephora invested in Augmented Reality to help customers try on cosmetic products remotely. However, there is currently no mainstream technology solution to digitize the experience of smelling a perfume.

Given that a large share of Sephora buyers, in general and for perfumes, are tech-savvy millennials, it is important that there be some parity between the in-store and online modes of purchase. This is especially true because these shoppers prefer to purchase in-store for the comprehensive service provided there, including the opportunity to try on the perfume in the store, which is the most influential in-store promotional activity on purchasing behavior. In fact, sixty-one percent of Sephora shoppers buy from both channels given the difference in experiences. In the modern world, where there is less time to travel and increased reliance on technology, we are interested in ways to enhance the online decision-making process for purchasing a perfume. By addressing these issues with fragrance purchasing in a virtual environment, we hope to give more power to customers, saving them time and cognitive distress, and to extend these findings to other sensory-dominant experiences.

2. Research

Context Research - Market analysis, observations, semi-structured interviews, task analysis

We conducted extensive contextual research for this project. After performing a market analysis, observations, semi-structured interviews, and task analysis for each of Sephora’s platforms (in-store, website, and mobile app), we decided to focus specifically on Sephora’s online fragrance purchasing process.

Online fragrance purchasing has many perceived benefits, including direct and easy access to real user reviews and a larger perfume selection than is available in-store. However, our research highlighted several key gaps between the in-store and online fragrance buying experience at Sephora, with most users viewing the in-store experience as superior. Observed in-store benefits include: 1) a reduced cognitive load, given that professional associates are able to provide suggestions based on knowledge of the perfume base, 2) the ability to physically smell the products, and 3) the option to request samples to take home.

USER GROUPS

Sephora fragrance customers, perfume enthusiasts, perfume experts.

Observations

Observations were conducted at the Sephora stores at Ponce City Market and Buckhead in Atlanta. More than 20 perfume buyers were observed across the two stores, and notes were taken.

RECRUITMENT

Recruitment occurred in Atlanta. I recruited in person by talking to acquaintances, snowballed through friends, and posted messages on social media to set up conversations with fragrance lovers and Sephora customers.

INDIVIDUAL INTERVIEWS

We conducted 6 in-person interviews, 3 remote interviews, and 2 in-store interviews.

Research Goal

To understand how to help users make more informed decisions when purchasing a perfume over the internet.

Research Questions

What are the issues faced by users when buying perfume?

How do users cope with these issues?

How do users decide which perfume to choose without smelling it?

Empathy Map

To better understand how a broad range of customers feel about their fragrance shopping experience with Sephora, we generated an empathy map based on user comments left on Sephora.com. We collected 92 online comments from Sephora's perfume pages, drawn from the best-selling value set, full-size perfume, rollerball perfume, and allure recommendations categories.

Users' current pain points include a mismatch between the product image and the actual product size, incomplete information such as lasting time, and the inability to know the smell before purchase. On the other hand, users gain social acknowledgement, self-acknowledgement, a good mood, and more when they find the right perfume for themselves or for others.

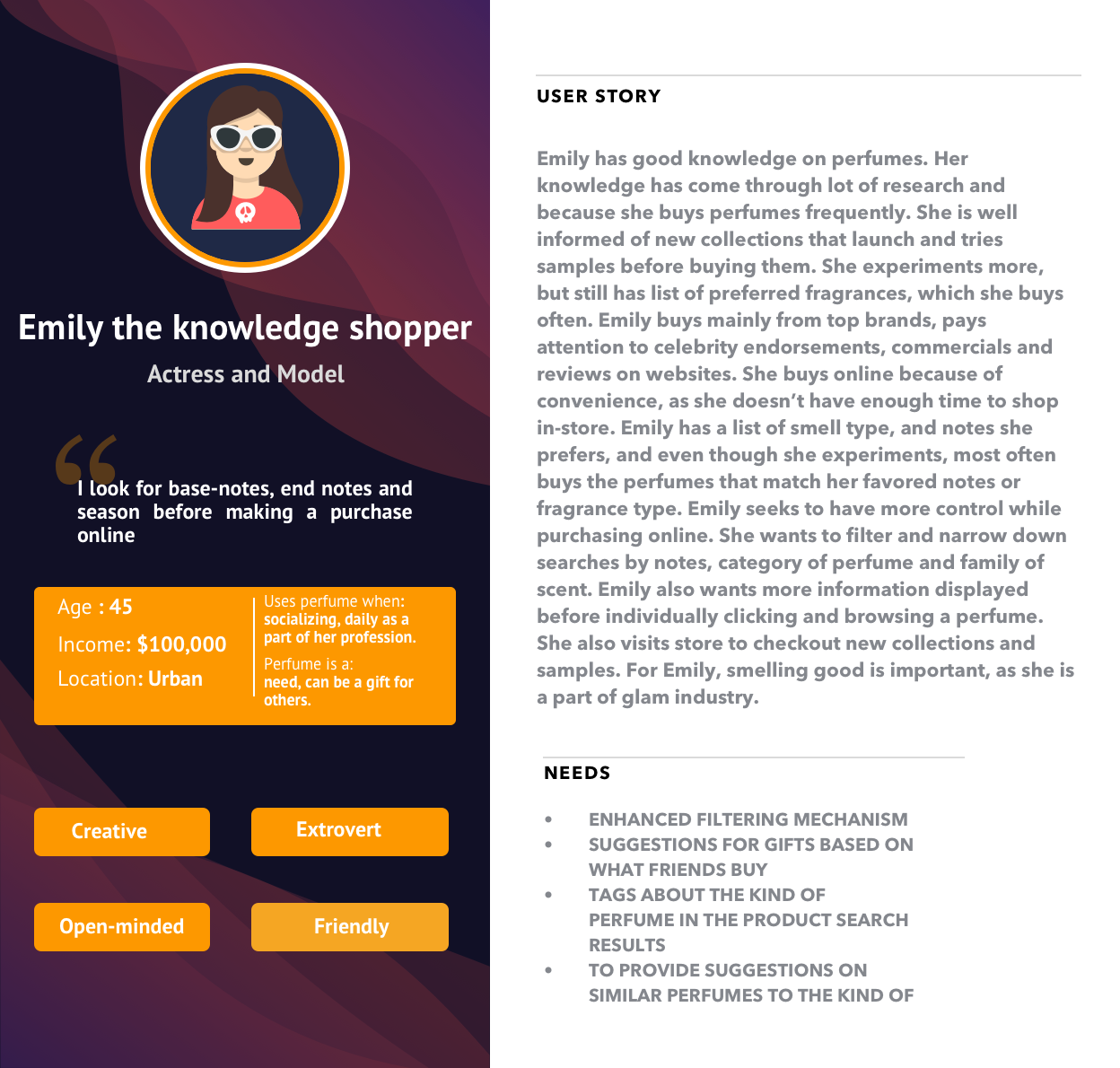

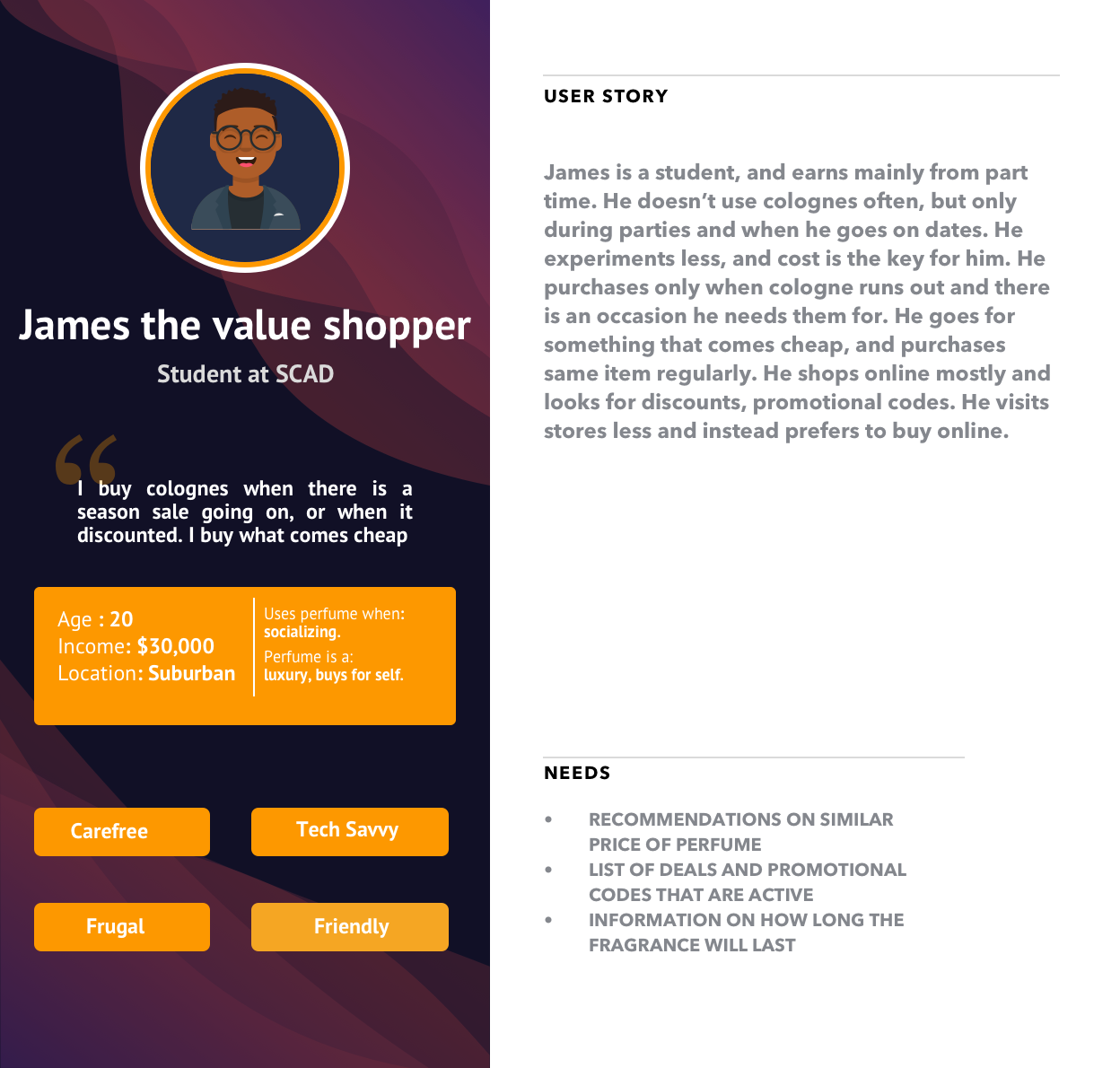

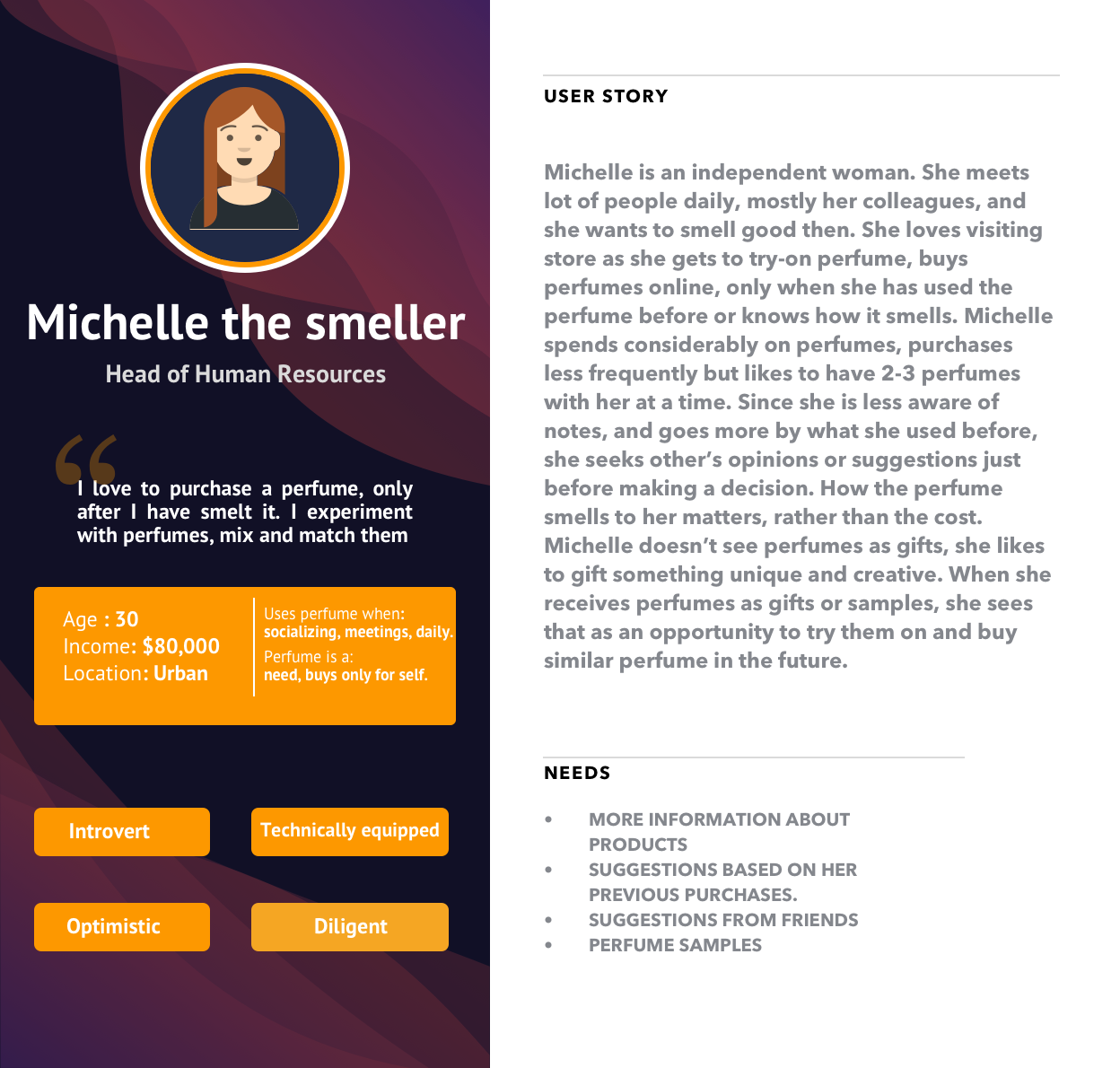

Personas

Based on user data collected in our earlier research, we made a set of assumptions to construct the basic structure of the personas around customers' behavior. Later in the project, we engaged with users in more detail through surveys, contextual interviews, and data mining of reviews on product pages and various social media platforms. The data from these research methods enabled us to add more detail to the personas we had constructed.

Meet Emily! She is an expert perfume shopper. An actress by profession, she is well informed of new collections that launch and tries samples before buying them.

Learn about James. He is your ‘deals’ shopper and is extremely frugal when spending on colognes. Although a social person, he visits stores less often and prefers to buy online.

Michelle is a hardworking, successful, and independent person. She loves visiting the store because she gets to try on perfumes, and she buys perfumes online only when she has used them before or knows how they smell.

Emily, James, and Michelle represent the majority of fragrance shoppers across Sephora's physical stores, Sephora.com, and the Sephora mobile app.

Focussed Research - Cognitive Walkthrough, Survey, Contextual Interviews

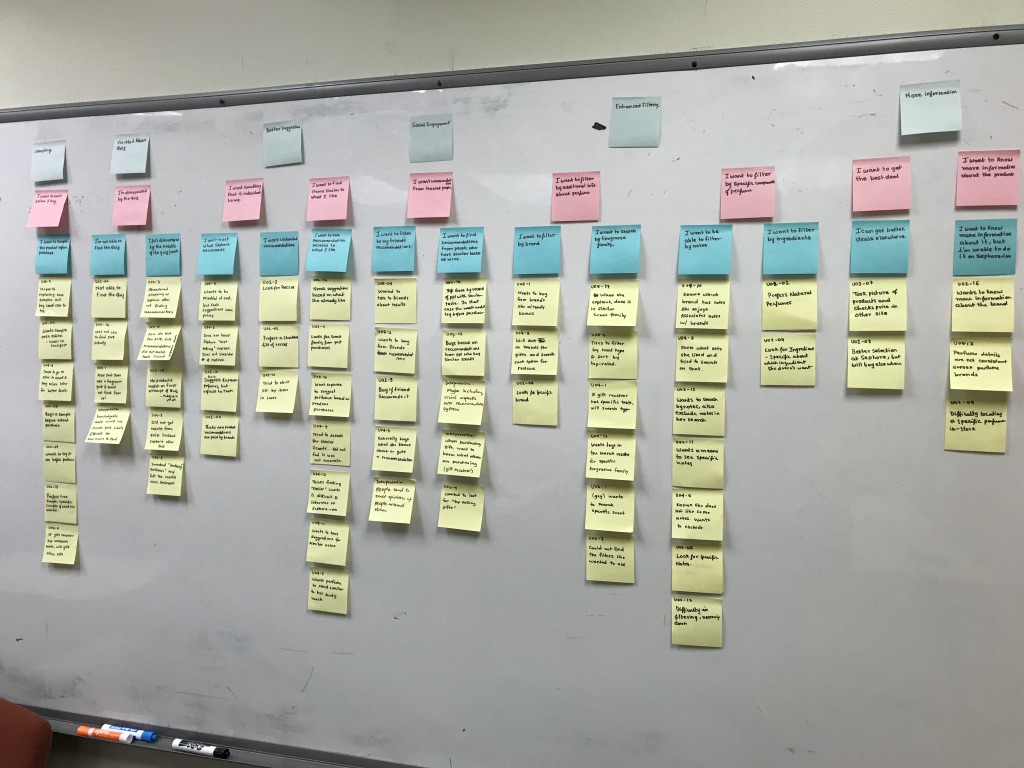

To better understand the online context in greater detail and derive specific user requirements, we did a cognitive walkthrough, conducted a survey, performed seven contextual interviews, and developed an affinity map.

RECRUITMENT

Recruitment occurred in Atlanta. I distributed our screener survey online through Facebook groups, Slack groups, and Reddit. I also recruited in person by contacting friends of my peers and by networking.

SCREENER SURVEYS

I created a survey for fragrance buyers that over 120 people answered. It collected data on frequently experienced issues while buying perfumes and on respondents' preferred medium for buying them. I also collected demographic information and the reasons why a respondent is interested in buying a perfume.

INDIVIDUAL INTERVIEWS

We conducted 8 in-person interviews.

Analysis - Affinity Mapping

We observed some limitations of this method. The initial coding process was intensive and time-consuming; we had to go through four rounds before a clear structure emerged. Identifying a common theme for higher-level coding was also difficult: the data could be interpreted in more than one way, and we had to be careful to choose the coding that stayed closest to the data we obtained from the contextual interviews. The population chosen for this method was a convenience sample, so the results may not fully apply to the larger population. We therefore used other research methods as well (survey, interviews, cognitive walkthrough) and converged the findings to reduce the bias introduced by this method.

Key Findings from Affinity Map

Better filtering is needed to allow users to narrow results as they desire

Increased social interaction surrounding the perfume purchase is often sought

More targeted and personalized recommendations are required

Users need a better way to understand how the perfume truly smells

A less overwhelming and cognitively demanding interface is required

At a minimum, users require a more trusted method for validating suggestions and a better understanding of a perfume’s true scent. To address this problem, four key areas emerged surrounding sampling, filtering, personalization, and social engagement.

3. Ideation & Sketching

We held a brainstorming session in which we came up with ideas that could potentially address the user needs we had identified, and then delegated tasks among team members to produce sketches for each of the ideas we discussed.

Using findings from research, we began to construct mental models of proposed solutions. To truly determine if these solutions were feasible or even desirable, we had to get visual representations of these ideas in front of actual users.

Sketching

To complete this task, we used the four areas of focus as reference (sampling, filtering, personalization, and social engagement) and individually developed as many sketches as we could spanning these areas. We then held a sketch review to aggregate our best ideas into full solutions for each of the four focus areas and did another round of sketching.

Evaluation

The evaluations were carried out in an ad-hoc fashion, with each member reviewing one of the four core areas with at least three potential users. We focussed on certain areas of the design for general impressions and perceived usefulness.

4. Prototyping

Wireframe

Sephora.com is an established ecommerce website. Given that they have an existing flow that is easy to navigate and understand, we decided to integrate our design solutions into the current navigation structure.

Sitemap for Wireframe

Wireframe

Prototype - Initial Version

Sitemap for Prototype

Interface Screens of Initial Prototype

Interactive Prototype

The prototype is available at http://vgemxt.axshare.com/#p=home (password: sephora).

Evaluation

After agreeing on the correct questions to evaluate against our research goals, our team developed a single Excel spreadsheet to better standardize responses and aid in analysis. Then, each team member set out to conduct several interviews on their own. We tested our prototype with six users. As in the sketch testing, these users were geographically located participants.

5. Iteration

Prototype - Version 2

Based on the feedback received on the initial prototype, we outlined changes that address both the positive and negative user feedback and included them in this second iteration.

Try the prototype below, or at: https://invis.io/RJEJLBLC2

6. Usability Testing

Usability Evaluation - Usability Benchmarking, A/B Testing, Cognitive Walkthrough & System Usability Scale

Evaluation Technique - Usability Testing and Expert Inspections

To evaluate our designs, we decided to conduct both user-based and expert evaluations. By combining these two methods, we gained a more robust understanding of usability concerns within our designs.

Usability Benchmarking Sessions - Benchmarking Tasks, 6 Sessions

To understand how various users of our system would traverse our designs, we asked them to complete several tasks that spanned most new features and pages. To standardize the testing, we composed a script for the moderator to follow and constructed a data collection document to capture test details. For all questions, we asked the participants to think aloud while performing their tasks. We chose this method due to the rich data that is provided by understanding a user’s thought process.

Other Forms of Testing - A/B Testing, Task Load Index Measure & Capture of Quantitative Metrics

Alongside the usability benchmarking sessions, we also conducted A/B testing between our design and that of sephora.com. To avoid biasing participants toward a particular design, we switched the order in which Sephora’s current design and our design were shown. After every task in the A/B testing, users were handed a NASA TLX questionnaire to complete before moving on to the next task. Other quantitative measures, such as time to completion and number of clicks, were captured for tasks performed on both our design and sephora.com.
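The order switching described above can be sketched as a simple counterbalancing scheme. This is a minimal illustration, assuming strict alternation by participant index; the participant IDs and the labels "rephora" and "sephora" are hypothetical placeholders, and the actual assignment used in the study may have differed.

```python
def assign_design_order(participant_ids):
    """Alternate which design each participant sees first.

    Even-indexed participants start with our design, odd-indexed
    participants start with the existing site (assumed scheme).
    """
    orders = {}
    for index, pid in enumerate(participant_ids):
        if index % 2 == 0:
            orders[pid] = ("rephora", "sephora")
        else:
            orders[pid] = ("sephora", "rephora")
    return orders


# Hypothetical participant IDs for six sessions.
participants = ["P1", "P2", "P3", "P4", "P5", "P6"]
order_by_participant = assign_design_order(participants)

for pid, order in order_by_participant.items():
    print(pid, "->", " then ".join(order))
```

With an even number of participants, each design is shown first equally often, so any learning effect from seeing one design before the other is spread across both conditions.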

Usability Expert Inspections - 3 Cognitive Walkthrough Sessions

By using experts, we were able to focus more of our efforts on the usability of task flows and design elements that are potentially not addressed by the common user. We conducted 3 cognitive walkthrough sessions with usability experts (our peers). The experts were given the same tasks that were designed for the users. While the expert performed the tasks and identified issues in our prototype, a member of our team acted as an observer and took notes. For each sequence or subtask, the expert identified issues as they tried to perform the task. The expert then provided recommendations, discussed additional problems with the interface, and shared insights with the observer.

System Usability Scale (SUS)

The System Usability Scale (SUS) is a standardized, widely used ten-item questionnaire for assessing the usability of a system. We found it well suited to measuring the usability of our prototype.

The SUS questions collected self-reported metrics of the users. Through these questions, we measured ease of use, learnability, effectiveness, performance and satisfaction of the users towards our prototype.
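For readers unfamiliar with how a SUS score is derived, the standard scoring procedure can be sketched as follows. Odd-numbered items are positively worded and contribute (rating - 1); even-numbered items are negatively worded and contribute (5 - rating); the sum is multiplied by 2.5 to give a score out of 100. The responses below are made up for illustration and are not data from this study.

```python
def sus_score(responses):
    """Compute a SUS score (0-100) from ten ratings on a 1-5 scale.

    responses[0] is item 1, responses[1] is item 2, and so on.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")

    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if not 1 <= rating <= 5:
            raise ValueError("each rating must be between 1 and 5")
        if item_number % 2 == 1:
            total += rating - 1   # positively worded item
        else:
            total += 5 - rating   # negatively worded item
    return total * 2.5


# Hypothetical responses from a single participant.
example_responses = [5, 1, 4, 2, 5, 1, 4, 2, 5, 2]
print(sus_score(example_responses))  # 87.5
```

The overall score for a study is then the mean of the individual participants' scores.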

Results

SUS responses and other subjective data were rated on a scale of 1-5 (or 1-7 in the case of the TLX scale), and we calculated the overall average of these reported metrics. Qualitative data from the cognitive walkthroughs and think-aloud data from the usability testing sessions were coded iteratively and synthesized until they converged into themes.

SUS Scores

Each participant’s individual SUS score was above the average SUS score of 68, which shows that every participant rated the system above average. Consequently, the overall average was also above the 68 threshold: the average score across our participants was 82.9.

SUS Score Out of 100

Self Reported Metrics


Participants answered these questions on a Likert scale from 1 to 5, where 1 means least likely or least confident and 5 means most likely or most confident. Our interface fared better than Sephora's on the questions comparing users’ confidence in how the fragrance would smell and their likelihood of making a blind buy (purchasing a fragrance without smelling it).

Task Load Index

We were interested in capturing a standardized measure of usability. We chose NASA TLX for these tasks specifically because it focuses on mental load and frustration, which are key concerns when comparing two designs. NASA TLX is also more directed at task performance than other standardized questionnaires, which provide a more general overview of a system's usability. Participants answered these questions on a 7-point scale, where 1 is Very Low and 7 is Very High. Our designs outperformed Sephora’s designs on all criteria.
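As an illustration of how such ratings can be summarized, the sketch below computes an unweighted ("raw") TLX score as the mean of the six NASA TLX subscales on the 7-point scale described above. The ratings are invented for the example and are not the study's data; whether the study used raw or weighted scoring is not stated, so the unweighted mean here is an assumption.

```python
from statistics import mean

# The six NASA TLX subscales.
TLX_DIMENSIONS = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Return the unweighted mean of the six subscale ratings (1-7)."""
    return mean(ratings[dimension] for dimension in TLX_DIMENSIONS)


# Hypothetical ratings from one participant for one task on each design.
ratings_our_design = {
    "mental_demand": 2, "physical_demand": 1, "temporal_demand": 2,
    "performance": 2, "effort": 3, "frustration": 2,
}
ratings_current_site = {
    "mental_demand": 5, "physical_demand": 2, "temporal_demand": 4,
    "performance": 4, "effort": 5, "frustration": 4,
}

print("Our design:", raw_tlx(ratings_our_design))
print("Current site:", raw_tlx(ratings_current_site))
```

A lower value indicates a lower perceived workload, so in this made-up example the first design would be the less demanding one.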

Task Load Index

Average Task Completion Time

Task Completion Time

To analyze our results, we looked at the raw individual times and then compared the average times across participants. Participants took less time to complete the tasks on our interface than on Sephora.com.

Given that we recorded these times while users were thinking aloud, this may have skewed our results. However, under the assumption that users who are more verbose will talk a similar amount for each task, it is still a valid measure of performance.
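The comparison can be sketched as follows: average the times per design, and also look at each participant's own difference between the two designs. The within-participant difference is what the verbosity assumption above relies on, since a talkative participant inflates both of their own times by a similar amount. The participant IDs and times are hypothetical and are not the study's measurements.

```python
from statistics import mean

# Hypothetical completion times in seconds for one task.
task_times_seconds = {
    "P1": {"rephora": 40.0, "sephora": 60.0},
    "P2": {"rephora": 50.0, "sephora": 70.0},
    "P3": {"rephora": 30.0, "sephora": 50.0},
}


def mean_time(times, design):
    """Average completion time across participants for one design."""
    return mean(per_design[design] for per_design in times.values())


def paired_differences(times):
    """Per-participant time saved on our design versus the current site."""
    return {
        pid: per_design["sephora"] - per_design["rephora"]
        for pid, per_design in times.items()
    }


print("Mean on our design:", mean_time(task_times_seconds, "rephora"))
print("Mean on current site:", mean_time(task_times_seconds, "sephora"))
print("Time saved per participant:", paired_differences(task_times_seconds))
```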

Cognitive Walkthrough

The experts agreed that the first, third, and fourth tasks (purchasing a sample of Gabrielle, taking the Fragrance IQ Quiz and picking the top recommendation, and excluding orchid from the filters) were easily navigated and understood. The second task, identifying similar scents, was felt to be less clear. The concerns with the first three tasks were mostly about the visibility of the functionality, while one of the experts felt that the exclude functionality of the filters needed some time to understand. For a company that has so many products and options to list, making a particular menu item or filter visible is a challenge, and the experts' comments reflected this.

Positives & Scope for Improvement - Rose, Buds & Thorns Method

Affinity Map

Based on the data collected from participants’ think-aloud sessions, we analyzed the results by applying the Rose, Buds and Thorns (RBT) methodology to the affinity mapping.

RBT has three fixed top-level categories, while all lower-level items are constructed through a bottom-up approach.

i. Rose/Positive Experience/Gain points: These are the features and aspects of an interface that users liked.

ii. Thorns/Negative Experience/Pain points: These are the features and aspects that users didn’t like, were annoyed by, were frustrated with, or that otherwise resulted in a poor experience.

iii. Buds/Opportunities to Consider: These are features that are not currently part of the interface, or that were not intended, but that could result in a better experience for the user if leveraged.

Positive Experiences – Features that Users Liked

1. Pros and Cons in Product Listing (2 users)

2. Quick View (2 users)

3. Filtering by perfume components, perfume size (2 users)

4. Recommendations on Social page, reviews on social page (3 users)

5. Able to find the quiz in the place where they expect the link to be (4 users)

6. Sample page (1 user)

7. Similar fragrances related to what the user is viewing (2 users)

8. Interface being better overall compared to Sephora (4 users)

9. Quiz located easily and with less effort on our design, while the same task was difficult on Sephora.com (4 users)

Negative Experiences – Troubles and Annoyances Users Faced

1. Quiz did not ask for budget or bottle size (minor, only 1 user had this issue)

2. Difficulty in locating the social page (severe, 4 users faced this issue)

3. Difficulty in finding the page that suggests similar perfumes (moderate, only 2 users had this issue)

Opportunities to Consider

1. User doesn’t trust the similar-perfume recommendations or the search results when filtering by longevity (minor, only 1 user had this issue)

2. Users find the search bar more intuitive to use than the filters (major, 4 users)

3. Users find the similar-perfume suggestions more on the quick look card than on the product page (major, 4 users)

4. Users spot the Fragrance IQ Quiz link more under the fragrances sub-menu of the Shop menu (major, 3 users)

Limitations

Research Participants

The majority of our participants were from Atlanta, which is not necessarily representative of general Sephora customers. Most of our participants were urban residents and were either residing or working at a place close to Sephora physical outlets.

Bias

A few participants in our study were acquaintances of, or had well-established relationships with, some of our team members. This may have introduced some degree of bias into the results of our usability tests. However, we tried to minimize this bias by incorporating random sampling.